The 2021 Google I/O keynote was streamed live from the company’s Mountain View campus this morning, and it looked very different from previous years. Even though the event is virtual this year, the keynote covered several upcoming Google technologies and updates for the thousands of people tuning in on YouTube. In case you missed it, here’s a quick overview of what the tech giant covered this morning.

Sundar Pichai, CEO of Alphabet and Google, started by reviewing the past year and some of the ways the company helped keep teachers, students, and others connected and informed during the pandemic. Some of the features coming soon had already been announced, such as eco-friendly and indoor routing in Google Maps.

Google Workspace

Over the past year, with so many more people working from home, work is no longer just a place. Chromebooks and Google’s collaboration tools, like Google Workspace and Meet, have helped keep people connected. Smart Canvas is the latest addition to Google Workspace; the new feature will allow distributed teams to better plan projects with tasks, tracking, and next steps.

Search

Search is important, and AI is making it even better by giving people the results they need more quickly and accurately. Over the past few years, features like Google Lens have become a key component of search; Lens alone is used more than 3 billion times each month. Live Caption, another recent feature that captions just about any content on your Android device, generates 250,000 hours of transcriptions each day.

LaMDA, Google’s language model for dialogue applications, allows for even more natural conversations, including when searching. Google showed example conversations with Pluto and with a paper airplane, all generated by LaMDA with no scripted answers. In other words, the model uses machine learning to produce responses that keep a conversation open-ended and natural. The technology is still in the research stage and needs more work, since it occasionally gives inaccurate responses, such as Pluto enjoying playing with its favourite ball, the Moon. At the moment, LaMDA is trained only on text, but Google is working towards multimodal input that combines text, images, and voice. Examples shown include asking Google to find a route with nice mountain views, or jumping to the part of a YouTube video where a lion roars at the sun.

Quantum Computing and Qubits

Next up, Pichai talked about TPU v4, quantum computing, and qubits, and how much faster computing is becoming. It’s a bit over my head, but you can catch it in the video below if you wish.

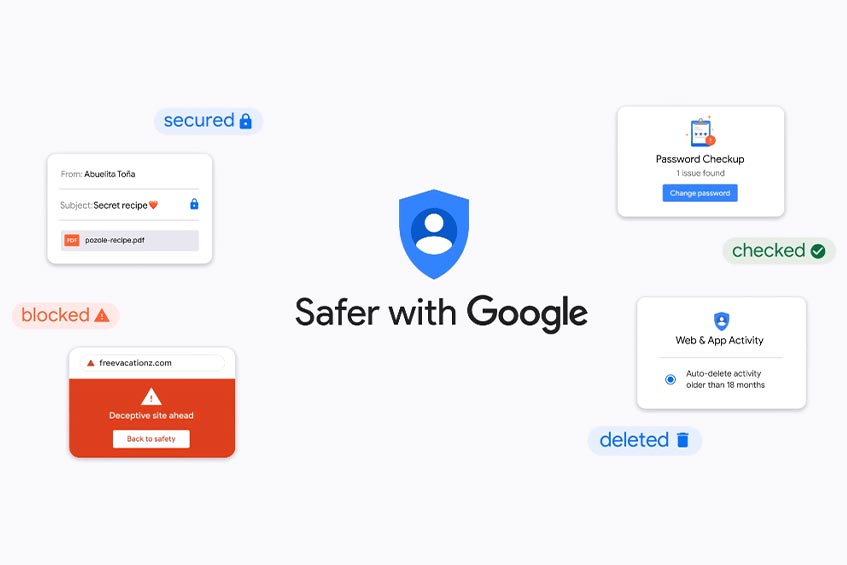

Security and privacy

All Google accounts are now set to auto-delete your Web & App Activity after 18 months unless you choose otherwise; you can find this setting in your Google Account under Activity Controls. According to Google, its products are secure by default and private by design, with the user in control.

Given the number of hacking attempts, and the fact that two-thirds of people reuse the same password across different accounts, Google Password Manager is getting smarter with four new upgrades: a password importer, deeper integration with Chrome and Android, automatic Password Alerts when a saved password has been compromised, and a quick-fix feature in Chrome that lets you change a compromised password much faster.

Google is also working on more security features and on making privacy and security settings easier to access. Another new feature is Locked Folder in Google Photos, rolling out first on Google Pixel phones. Photos added to this folder are password-protected and won’t appear when you scroll through Google Photos or in any other apps on your Android device.

Multitask Unified Model (MUM)

Next up, Prabhakar Raghavan, Senior Vice President at Google, took the Google I/O stage to talk about advances in AI that help people better understand the world through Search, Google Maps, and shopping. Google’s new Multitask Unified Model (MUM) aims to make information even more accessible and useful. The model can acquire deep world knowledge, generate language, train across more than 75 languages at once (instead of one at a time), and understand multiple modalities simultaneously. Because it can multitask, MUM can return results faster, and those results should be more useful. One real-world example is scanning a science problem with Google Lens and then getting helpful resources in the language of your choice.

Improved Search Source results

The box Google shows about a search result’s source is getting more improvements, including when Google first indexed the source, what other sources say about it in the Knowledge Box, and what the source says about itself. Further enhancements to search source results are coming later this year.

Google Maps

Google Maps will get more than 100 AI-powered improvements this year. Live View will add more AR features, such as sign overlays, landmarks, and indoor navigation. Street maps will add sidewalks, crosswalks, and other key features for more granular detail. Maps will also adjust what it displays based on the time of day: in the morning, for example, coffee shops might be shown more prominently, while in the evening, restaurants will take prominence.

Shopping

Shopping Graph builds on Google’s Knowledge Graph to offer more detailed information, such as SKUs, images, and product details. The new feature will help consumers connect more quickly with retailers to buy the products they want. Google Photos will also let you search within photos to get more information on, for example, a pair of shoes someone in the picture is wearing. Loyalty options are also coming soon to Google Shopping, offering users the best price based on their loyalty cards with various retailers.

Google Photos

The big news lately with Google Photos is that its unlimited free storage for high-quality photos ends on June 1st. That being said, the company has announced even better search and memories features powered by AI. “Little patterns” looks for patterns across your photos and surfaces results based on them. For example, it can build a memory from a set of photos taken over time that share a recurring element, such as a backpack or your family sitting on the same couch.

Many people take two or three photos to get the best shot. When Google Photos finds several nearly identical photos, it can use machine learning to create a “cinematic moment.” In other words, AI synthesizes the movement between two or three photos to create a moving photo.

New controls let users hide images of a certain person (after a bad breakup, for instance), a certain moment (such as a tragedy), or other painful memories. This keeps painful memories from resurfacing, and users will also be able to remove these photos entirely much more easily.

Material You

Matias Duarte, Google’s VP of Design, took the stage to talk about design across Google’s computing products. While Material Design works great for Android, computing is now showing up in more places and on more screens. Google’s new design language, dubbed Material You, focuses on customization for the user. It lets users customize the colours and size of elements, and those choices then carry across the different devices they use and are signed into.

Material You will come to Google Pixel devices in the fall, with the web, Chrome OS, wearables, and other displays following next year.

Android 12

The key focus of any Google I/O is the upcoming version of Android. With redesigned widgets and Material You, Android 12 is what Google calls its “most personal OS ever.” Sameer Samat, VP of Product Management, noted that there are more than 3 billion active Android devices around the world and called Android 12 their most ambitious update ever. For the upcoming OS, Google focused on three ideas: smartphones are deeply personal, they should be private and secure, and all your devices should work better together with your phone.

With Android 12, everything from the lock screen to Settings has been revamped. To start, when you change your wallpaper, the operating system creates a custom palette and applies its hues across the device. Beyond the visual redesign, interactions are simpler and easier to use. Quick Settings now include Google Pay and other frequently used shortcuts, and code improvements make for smoother transitions and faster overall performance.

The new Privacy Dashboard shows which permissions were last used and by which apps. Even better, users can revoke permissions directly from the Privacy Dashboard. Two new Quick Settings toggles have also been added to turn off camera and microphone access across all apps on the device.

Samat went on to talk about current cross-device functionality, such as interactions between your smartphone, Chromebooks, and even Android Auto. New features coming to Android include built-in TV remote functionality, wireless Android Auto, a digital car key (on select Pixel and Samsung devices), and more.

Starting today, you can try out the Android 12 Beta on devices from 11 different manufacturers, including Google, Oppo, Xiaomi, OnePlus, TCL, and ZTE.

Wear OS

Google introduced the “biggest update to Wear OS ever.” Aiming for a unified platform, the company worked with Samsung on battery life, performance, and making it easier for developers to create apps. A new customer experience, along with world-class health and fitness features from Fitbit (which Google acquired), rounds out the key updates to the wearable OS.

Through its partnership with Samsung, Wear OS combines features from Samsung’s Tizen wearable OS and offers better battery life, faster performance (apps start up to 30% faster), and a thriving developer community. Even though the two companies are spearheading this effort, Wear OS will still be available to all Android wearable manufacturers. More end-user enhancements are coming as well, including more Tiles and easier navigation. Fitbit’s health expertise will also be integrated into Wear OS for better health and fitness tracking.

If you’d like, you can watch the event in the video below.

What do you think about the 2021 Google I/O keynote? What were you most excited to hear? Let us know on Twitter or MeWe.