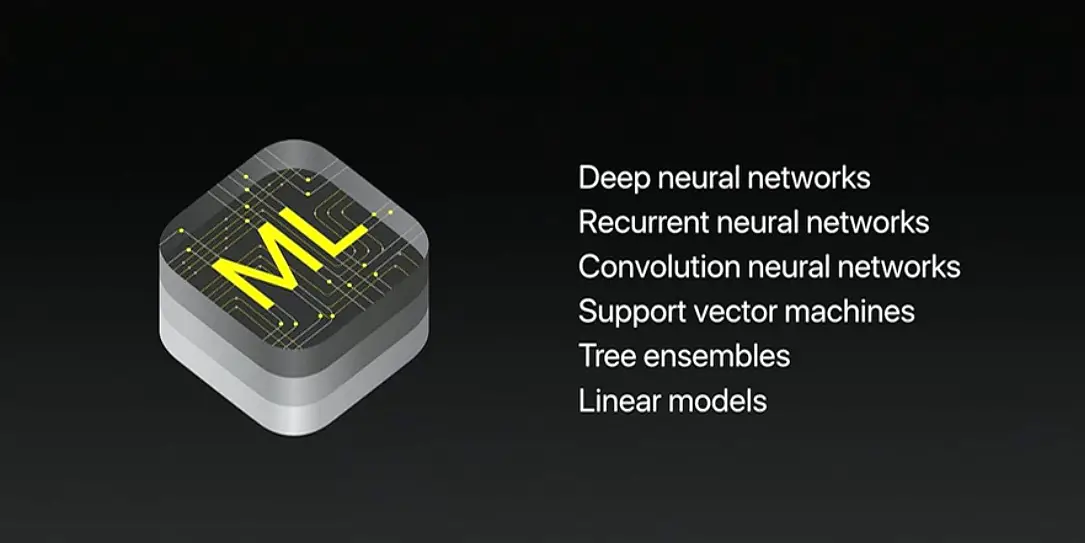

One of Apple’s new technologies is called CoreML. CoreML is Apple’s jump into machine learning; it is currently in beta and is expected to arrive with iOS 11 in September. Other companies, like Google, are also experimenting with machine learning, and the technology still has plenty of growing to do. As with any beta software, developers are already building apps and tinkering with what CoreML can do.

One video has surfaced, via Reddit, that shows an app identifying objects almost instantly using Apple’s CoreML technology. Curiously, the person who posted the video credited Google’s Vision API but other redditors quickly corrected that mistake. Take a look at the video below.

As you can see from the video, the app was able to identify the objects on the table quickly and accurately. The one caveat is that the app must be taught first: the models have to be loaded into it before it can identify anything. All of this happens offline within the app, so there are no APIs to reach out to. This is just a small demo of what the technology can do, and we expect other developers to start showing off their work so we can see what CoreML is really capable of.

Supported features in Apple’s Vision framework, which works alongside CoreML, include face tracking, face detection, landmarks, text detection, rectangle detection, barcode detection, object tracking, and image registration.

As Apple, Google, Microsoft, and others move forward with machine learning, it will be interesting to see which of them offers the most alluring platform and who ends up taking the top spot.

What do you think of this story? Let us know in the comments below or on Google+, Twitter, or Facebook.

Thanks to reader James Miller for tipping us off to this video.

[Source: Reddit](https://www.reddit.com/r/videos/comments/6iv4wg/googles_new_vision_api_is_unbelievably_fast/#bottom-comments)