Grainy photos aren’t pleasing to anyone, from pro photographers to the average person, and you’ll be hard-pressed to find someone who’d say one looks great. NVIDIA, of all companies, is trying to make your grainy photo blues a bit brighter. Researchers at NVIDIA, Aalto University, and MIT are developing AI technology that could remove the grain from photos. NVIDIA says the technology learns to correct grainy or pixelated photos by analyzing only grainy photos, with no clean versions to compare against.

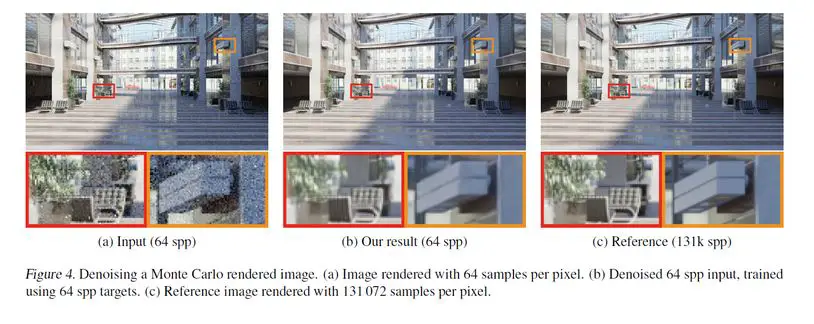

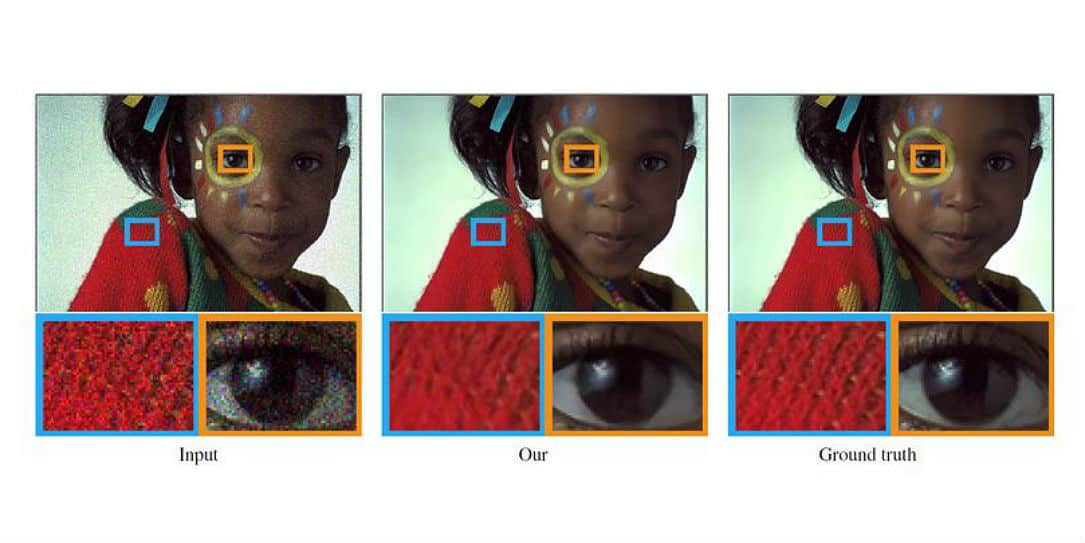

Recent deep learning work in the field has focused on training a neural network to restore images by showing it example pairs of noisy and clean images, so that the AI learns how to make up the difference. This method differs because it requires only pairs of noisy images: both the input and the training target contain noise or grain.

Without ever being shown what a noise-free image looks like, this AI can remove artifacts, noise, and grain, and automatically enhance your photos.
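To get a feel for why noisy targets can stand in for clean ones, here is a minimal toy sketch in plain Python. It is not the researchers' network or code: it assumes a one-dimensional "signal", zero-mean Gaussian noise, and a simple linear denoiser fitted by least squares. The names `fit_linear` and `demo` are made up for this illustration. The idea it shows is that when the noise in the targets averages out to zero, fitting against noisy targets lands on roughly the same denoiser as fitting against clean ones.

```python
import random


def fit_linear(inputs, targets):
    """Closed-form least-squares fit of targets ≈ w * inputs + c."""
    n = len(inputs)
    mean_in = sum(inputs) / n
    mean_t = sum(targets) / n
    cov = sum((a - mean_in) * (t - mean_t) for a, t in zip(inputs, targets)) / n
    var = sum((a - mean_in) ** 2 for a in inputs) / n
    w = cov / var
    c = mean_t - w * mean_in
    return w, c


def demo(num_samples=20000, sigma=0.2, seed=0):
    """Fit the same toy denoiser twice: once with clean targets, once with noisy ones."""
    rng = random.Random(seed)
    # Hypothetical "clean" signal values in [0, 1).
    clean = [rng.random() for _ in range(num_samples)]
    # Two independent noisy observations of every clean value (assumed zero-mean noise).
    noisy_in = [x + rng.gauss(0.0, sigma) for x in clean]
    noisy_target = [x + rng.gauss(0.0, sigma) for x in clean]
    # Conventional setup: noisy input, clean target.
    clean_fit = fit_linear(noisy_in, clean)
    # Noisy-only setup: noisy input, independently noisy target.
    noisy_fit = fit_linear(noisy_in, noisy_target)
    return clean_fit, noisy_fit


if __name__ == "__main__":
    clean_fit, noisy_fit = demo()
    print("trained on clean targets:", clean_fit)
    print("trained on noisy targets:", noisy_fit)
```

Running it prints two (slope, offset) pairs that should sit very close together. A real image denoiser is a deep network rather than a straight line, but the same averaging argument is what lets the training run without clean examples.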

“It is possible to learn to restore signals without ever observing clean ones, at performance sometimes exceeding training using clean exemplars,” the researchers stated in their paper. “[The neural network] is on par with state-of-the-art methods that make use of clean examples – using precisely the same training methodology, and often without appreciable drawbacks in training time or performance.”

Using NVIDIA Tesla P100 GPUs with the cuDNN-accelerated TensorFlow deep learning framework, the team trained their system on 50,000 images in the ImageNet validation set.
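The article doesn't spell out how the noisy training pairs were built from those images. One plausible setup, sketched below purely as an assumption, is to corrupt each dataset image twice with independent synthetic noise and use one copy as the input and the other as the target. The helper name `make_noisy_pair`, the Gaussian noise model, and the `sigma` value are all hypothetical choices for illustration, and images are represented as plain nested lists of pixel values to keep the sketch dependency-free.

```python
import random


def make_noisy_pair(image, sigma, rng):
    """Return two independently corrupted copies of `image`.

    `image` is a list of rows of pixel intensities. Values are left
    unclipped so the added noise stays zero-mean.
    """
    def corrupt():
        return [[px + rng.gauss(0.0, sigma) for px in row] for row in image]

    # Each call to corrupt() draws fresh noise, so the two copies differ.
    return corrupt(), corrupt()


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical 3x4 grayscale image with a flat intensity of 100.
    image = [[100.0] * 4 for _ in range(3)]
    noisy_input, noisy_target = make_noisy_pair(image, sigma=25.0, rng=rng)
    print(noisy_input[0])
    print(noisy_target[0])
```

In a training loop, `noisy_input` would be fed to the network and `noisy_target` would take the place of the clean image in the loss.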

“There are several real-world situations where obtaining clean training data is difficult: low-light photography (e.g., astronomical imaging), physically-based rendering, and magnetic resonance imaging,” the team said. “Our proof-of-concept demonstrations point the way to significant potential benefits in these applications by removing the need for potentially strenuous collection of clean data. Of course, there is no free lunch – we cannot learn to pick up features that are not there in the input data – but this applies equally to training with clean targets.”

The NVIDIA team is heading to the ICML conference to present their work on Thursday, July 12th. It’s pretty interesting stuff and we look forward to seeing more.

What do you think of this latest AI technology being used to denoise photos? Let us know in the comments below or on Google+, Twitter, or Facebook.

Source: [NVIDIA News Center](https://news.developer.nvidia.com/ai-can-now-fix-your-grainy-photos-by-only-looking-at-grainy-photos/)