While Adobe Photoshop has had content-aware fill — which modifies regions of an image based on the surrounding pixels — for some time, researchers at NVIDIA have taken the process one step further with deep learning AI.

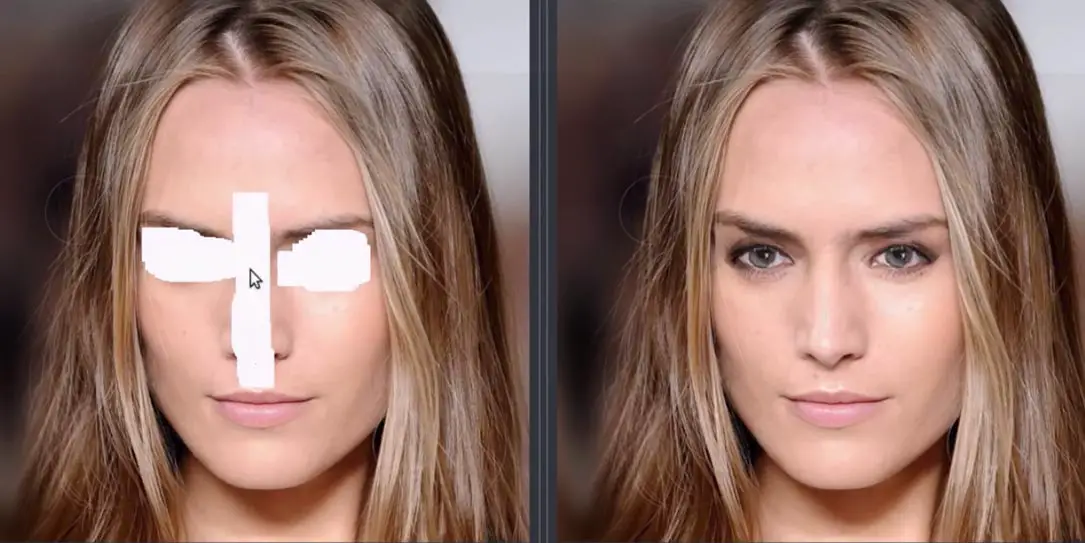

The method, developed by a team led by researcher Guilin Liu, lets users edit images and fill in missing areas with surprisingly realistic computer-generated content. The technique is known as “image inpainting,” and its results are already fairly robust.

“Our model can robustly handle holes of any shape, size, location, or distance from the image borders. Previous deep learning approaches have focused on rectangular regions located around the center of the image, and often rely on expensive post-processing,” the NVIDIA researchers stated in their research paper. “Further, our model gracefully handles holes of increasing size.”

The researchers trained their network on NVIDIA Tesla V100 GPUs using the cuDNN-accelerated PyTorch deep learning framework. The team first generated over 55,000 masks of random streaks and holes, then applied those masks to images from existing datasets so that the network would learn how best to reconstruct the pixels that had been masked out.
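To make the masking step a little more concrete, here is a rough sketch of how a random streak mask could be generated and applied to an image. This is not NVIDIA's code: the function names `random_streak_mask` and `apply_mask`, the random-walk approach, and every parameter are assumptions made purely for illustration, and the sketch uses plain Python lists rather than PyTorch tensors.

```python
import random


def random_streak_mask(height, width, num_streaks=4, max_steps=40,
                       thickness=1, seed=None):
    """Return a height x width grid where 1 = keep pixel and 0 = hole.

    Hypothetical helper: each streak is a short random walk that
    punches small square holes along its path.
    """
    rng = random.Random(seed)
    mask = [[1] * width for _ in range(height)]
    for _ in range(num_streaks):
        # Start each streak at a random position inside the image.
        y, x = rng.randrange(height), rng.randrange(width)
        for _ in range(rng.randint(1, max_steps)):
            # Punch a small square hole around the current position.
            for dy in range(-thickness, thickness + 1):
                for dx in range(-thickness, thickness + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < height and 0 <= xx < width:
                        mask[yy][xx] = 0
            # Take one random-walk step, clamped to the image bounds.
            y = min(max(y + rng.choice((-1, 0, 1)), 0), height - 1)
            x = min(max(x + rng.choice((-1, 0, 1)), 0), width - 1)
    return mask


def apply_mask(image, mask, fill=0):
    """Replace every pixel under a hole with `fill`.

    `image` is a grid of grayscale values (an assumption for brevity).
    """
    return [
        [pixel if keep else fill for pixel, keep in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# Toy example: a flat gray 32x32 "image" with random streaks cut out of it.
image = [[128] * 32 for _ in range(32)]
mask = random_streak_mask(32, 32, seed=0)
masked = apply_mask(image, mask)
```

In a training setup along these lines, the network would be shown `masked` together with `mask` and asked to reproduce the original `image`, so each mask and image pairing yields one training example.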


The results look very impressive, and it would be great to see features like this added to Photoshop and other image editing applications. Similar functions already exist, but they are nowhere near as capable: they can introduce distortion and blurriness, and they are best suited to removing small, specific flaws such as text or skin blemishes. NVIDIA’s results aren’t perfect, but if you didn’t see the before picture, in many of these cases you’d be hard pressed to tell what was altered without looking very closely.


Source: [NVIDIA](https://news.developer.nvidia.com/new-ai-imaging-technique-reconstructs-photos-with-realistic-results/)