Samsung employees work under some of the strictest privacy and secrecy rules of any tech company. Like Apple, the company wants to keep its sensitive data under wraps. New products, company assets, and source code in development are valuable and need to be safeguarded.

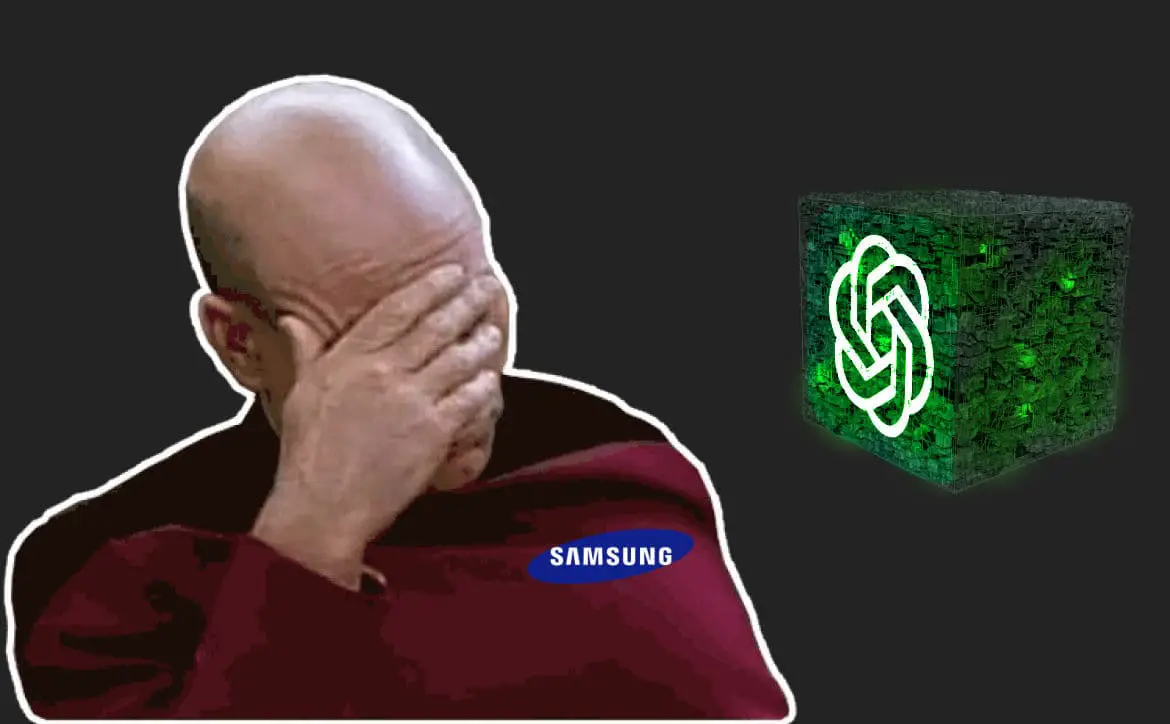

So it’s embarrassing that three Samsung employees who brought OpenAI’s artificial intelligence program, ChatGPT, into their workflow ended up leaking sensitive company data to the AI. While ChatGPT has been a good tool for many to test and play around with, it’s important to remember that using it is not a private affair. The data you feed it or ask it to compile is shared with OpenAI and can be used to train ChatGPT to give better answers.

But it doesn’t stop there. The data you share with ChatGPT could surface in answers to other users if it fits their inquiries. These three Samsung employees evidently did not read the fine print before using ChatGPT for some of their tasks.

The report came from The Economist Korea and was spotted by Mashable. According to Engadget, one employee reportedly asked the chatbot to check sensitive database source code for errors, another asked it to optimize code, and a third fed a recorded meeting into ChatGPT and asked it to generate minutes.

Engadget also reports that once Samsung learned of the security problem, it imposed a 1024-character limit on ChatGPT prompts. The company is reportedly investigating the three employees internally and is said to be working on its own AI chatbot to prevent similar leaks in the future.
